How Long Do Yelp Reviews Take to Post

Business owners are frequently frustrated to find positive reviews from their customers filtered out by Yelp. Why does this happen, and what can be done about it?

If you're a business owner or manager, there's a decent chance that you've spent some time obsessing over your Yelp reviews. (If you're not paying attention to your reviews, you should be: digital PR is a pretty significant facet of Internet marketing.) And if that's the case, then you've probably fumed over every positive review that's been condemned to the purgatory known as Yelp's "not currently recommended" section. And you're probably assuming that this is because you're not paying for Yelp's PPC services. Let's find out if that's true or not.

For those who aren't aware, if you scroll down to the bottom of a business's Yelp page, you'll see light gray text that says "X other reviews that are not currently recommended."

"Not recommended reviews" are reviews that have been filtered out by Yelp and not counted.

If a Yelp visitor chooses to dig deep and read them, they can. But these reviews are hard to find, and they don't contribute to the business's Yelp rating or review count.

According to Yelp, their algorithm chooses to not recommend certain reviews because it's believed that the flagged review is fake, unhelpful, or biased. However, some reviews are filtered simply because the reviewer is inexperienced, not attuned to the tastes of most of Yelp's users, or because of other concerns that have nothing to do with whether the Yelp user's recounting of their experience is accurate. Yelp says that roughly 25% of all user reviews are not recommended by the algorithm.

While Yelp at least admits that reviews may be filtered out simply because the reviewer isn't a frequent Yelp user, there's still a lot that's unclear. That's a problem, because the vagueness of this process could provide sufficient cover to conceal bias or unethical behavior on Yelp's part.

What actually determines whether a review is flagged by Yelp's filtering algorithm?

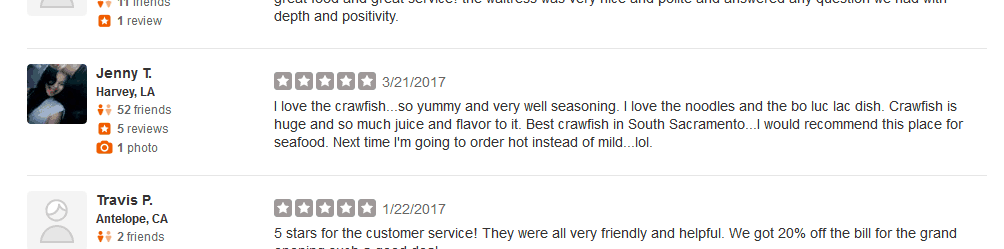

If you have some time to scroll through a few Yelp pages, you'll see reviews left by people who have written one review and don't even have a profile image, while reviews from more established Yelp users end up in the dustbin.

Why is the review above, written by a user with 52 friends and 5 reviews, filtered out while the one below makes the cut?

I've had to deal with Yelp issues when working with clients, and have written about Yelp in the past (see my article on "Dealing With Fake Reviews on Yelp"). In contemplating the many frustrating issues with Yelp, I've long wondered if it would be possible to determine whether a given review would be more or less likely to be filtered out by Yelp's algorithm.

The core of that question is, what does Yelp consider to be the critical components of a review's trustworthiness? I decided to try and find out.

What Yelp doesn't want you to know, and for good reason.

There is a fundamental limiting factor in any analysis of visible versus filtered reviews: you cannot look at the user profile of someone whose review is not recommended. It's not clickable. So any comparison of the two classes of reviews can't incorporate in-depth profile data such as time spent on Yelp, their "Things I Love" list, or whether a person has chosen a custom URL for their Yelp profile.

There's a reason for that: Yelp doesn't want people to know how their algorithm works. If we knew exactly how it worked, then we could game it. This significantly hampers the ability of an outsider to penetrate the machinations of Yelp's algorithm.

However, there are a few things of which we're pretty certain, but which we can't analyze in a meaningful way:

First, do not ask customers to write a Yelp review while they're at your business.

Yelp's site and mobile phone application can easily determine your physical location when you submit a review. If you submit a review while you're at the business's location, Yelp will be able to tell, and it's extremely likely that they'll filter the review. A good way to get around this is to send a follow-up email to the customer a few days after you help them, asking them how their experience was, and to leave a review for you if their experience was positive.

Don't provide customers with a direct link to your Yelp page if you're asking them to review your business.

Yelp can look at the referring domain name, and if they see that the person reviewing Doug's Fish Tank Shop was referred by dougsfishtanks.com, they'll know that the customer was specifically referred by you and filter the review. Instead, just say, "Please visit Yelp.com, look up our business, and leave us a review." This eliminates the suspicious linking that would otherwise lead to the review being filtered.

The timing of reviews matters.

If you hold a day-long promotion during which you offer customers some sort of deal if they leave a positive review for your business on Yelp, what Yelp is going to see is that a business which had previously received just a handful of reviews over several years is all of a sudden getting multiple reviews on the same day. That's going to look just a teensy bit suspicious, and all of those reviews are going to end up on the Island of Misfit Reviews, along with all of the other filtered reviews. If you insist on having some sort of promotion, spread it out over time. Make it part of your standard follow-up communication, as suggested above, rather than some sort of special event that immediately puts Yelp on high alert.

We can't attest to the above based on statistics and analysis, because Yelp keeps that data in the digital equivalent of Fort Knox. But based on experience, we can infer that the above is true.

As a result, my analysis is restricted to just the data that's publicly available to anyone who decides to spend a few hours crawling through Yelp with an Excel spreadsheet. Essentially, my analysis assumes that all of the reviews that I looked at were left by honest individuals who weren't coerced by business owners or acting on an agenda.

So, with that assumption in mind, what determines whether a Yelp review is filtered?

Designing a data analysis of Yelp reviews.

Ultimately, I chose to focus on five review variables: whether the review's author has a profile image, the number of friends they have, the number of reviews they've written, how many photos they've submitted, and the rating of the review. I opted to choose five random businesses in the local Sacramento area: a restaurant, auto repair shop, plumber, clothing store, and golf course. I collected this data for each and every visible and filtered review for these five businesses to see if the comparisons made anything clear.

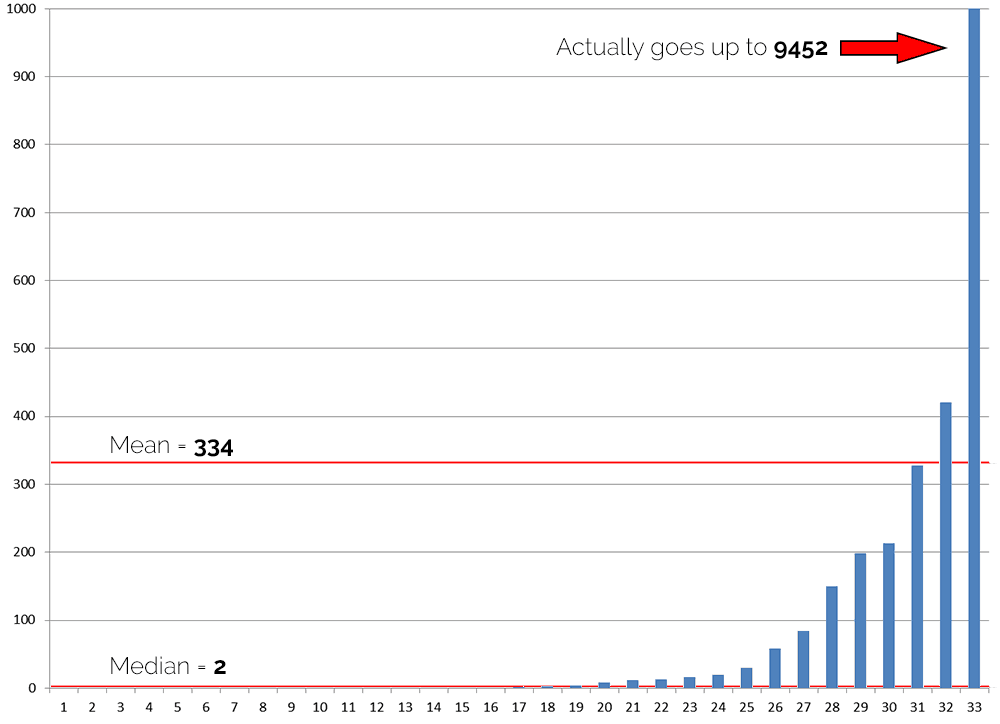

Now, there is a complicating factor for any comparison of Yelp reviews. In a phrase: power users. Many Yelp users are very casual, leaving only a small handful of reviews and primarily using the service to view reviews left by other users. But there is a small coalition of super users who contribute a LOT of reviews to Yelp.

This isn't an issue when it comes to looking at an average rating score. Whether you're a super user or a novice, your ratings get equal weight (unless your review gets filtered out), and a single rating can't swing things much, because there's a maximum of 5 stars.

But when it comes to variables that don't have a maximum value, things can get a little crazy. For example, one business I looked at had 33 reviews. When I took a look at how many photos each user had previously submitted to Yelp, I found that while most users had contributed zero or very few photos, one user had submitted 9,452 photos to Yelp. Look at this graph of each user's photo count; it's absurd (keep in mind that the scale of the graph maxes out at 1,000 photos):

This presents a serious problem. A single person skews the average to an absurd degree: only two users' photo counts exceed the mean. It's like having the valedictorian in your math class. They completely wreck the grading curve.

With this in mind, for all other variables besides Yelp rating, I chose to use the median. For our purposes, a median is really useful because it gives us a number where half the users in a group fall below that number, and half of the users are above it. The median is the proverbial C student, smack in the middle of the demographic.

To summarize, the analysis below relies on the mean of user ratings, and the medians of photo counts, review counts, and friend counts. I also compared the percentage of visible reviews left by users who set profile images with the same percentage for filtered reviews.
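To see why the median is the safer summary for uncapped variables, here is a minimal Python sketch. The photo counts are hypothetical, invented purely for illustration; only the 9,452 outlier is borrowed from the example above.

```python
from statistics import mean, median

# Hypothetical photo counts for a handful of reviewers (illustrative only).
# The single 9,452 value mirrors the power user described above.
photo_counts = [0, 0, 1, 2, 3, 5, 9452]

avg = mean(photo_counts)    # dragged far upward by the one power user
mid = median(photo_counts)  # the middle value, barely affected by the outlier

# How many users actually sit above the mean?
above_mean = sum(1 for count in photo_counts if count > avg)

print(f"mean = {avg:.1f}, median = {mid}, users above the mean = {above_mean}")
```

In this made-up sample the mean lands above a thousand photos even though nearly everyone has posted five or fewer, while the median stays at a value that describes a typical reviewer.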

In each comparison, the first figure will be from the visible reviews, while the second will be from filtered reviews.

Average Yelp Rating

This wasn't nearly as exciting as I expected (and hoped) it would be.

- Restaurant: 4.1 vs 4.1

- Auto Shop: 4.4 vs 4.1

- Plumber: 4.4 vs 4.1

- Clothing: 4.3 vs 4.9

- Golf Course: 3.2 vs 3.6

In two cases, the average score for visible reviews was greater than that of filtered reviews. In one case they were equal, and in two cases the filtered reviews' average rating was greater than that of the visible reviews.

This is the sort of fairly random distribution that you would expect if Yelp's algorithm didn't take the rating into account. Basically, Yelp isn't stealing your five-star reviews.
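The tally above can be reproduced with a short Python sketch. The figures are the mean ratings listed for each business; the variable names are my own and are only there for illustration.

```python
# Mean ratings per business as (visible, filtered), taken from the list above.
ratings = {
    "Restaurant": (4.1, 4.1),
    "Auto Shop": (4.4, 4.1),
    "Plumber": (4.4, 4.1),
    "Clothing": (4.3, 4.9),
    "Golf Course": (3.2, 3.6),
}

# Sort each business into one of three buckets by comparing the two means.
visible_higher = [name for name, (vis, filt) in ratings.items() if vis > filt]
filtered_higher = [name for name, (vis, filt) in ratings.items() if filt > vis]
equal = [name for name, (vis, filt) in ratings.items() if vis == filt]

print("Visible higher: ", visible_higher)
print("Equal:          ", equal)
print("Filtered higher:", filtered_higher)
```

With only five businesses, a two/one/two split like this is no proof of anything on its own, but it is consistent with the rating not being a filtering factor.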

Percentage of Users with Profile Images

These days, social media has a huge impact on business and culture. Consequently, it has become imperative to understand who a user is in order to have a better understanding of their viewpoint.

With this in mind, it's easy to see how Yelp might be more mistrusting of an anonymous user who doesn't add a profile photo, versus someone who does. And it appears the data supports this assumption.

- Restaurant: 71% vs 50%

- Auto Shop: 67% vs 62%

- Plumber: 49% vs 22%

- Clothing: 88% vs 33%

- Golf Course: 88% vs 60%

There is a very clear trend here. With the auto shop the difference is pretty small, but in every case visible reviews were more likely to have profile images associated with them. Aside from the auto shop, there was a gap of 21 points or more between the use of profile photos in visible reviews and hidden reviews.

Within my data sample, the overall percentage of visible versus filtered reviews with profile images was 71% versus 45%. The pretty clear takeaway from this is that the presence of a profile image does have an impact on review filtering.
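For readers who want to check the gaps themselves, here is a small Python sketch that computes the percentage-point difference for each business from the figures listed above. The dictionary and variable names are illustrative, not part of any Yelp tooling.

```python
# Percentage of reviews whose author has a profile image: (visible, filtered).
profile_image_pct = {
    "Restaurant": (71, 50),
    "Auto Shop": (67, 62),
    "Plumber": (49, 22),
    "Clothing": (88, 33),
    "Golf Course": (88, 60),
}

# Gap in percentage points between visible and filtered reviews.
gaps = {name: vis - filt for name, (vis, filt) in profile_image_pct.items()}

# Smallest gap once the auto shop, the one weak case, is set aside.
smallest_other_gap = min(gap for name, gap in gaps.items() if name != "Auto Shop")

for name, gap in gaps.items():
    print(f"{name}: {gap} points")
print("Smallest gap excluding the auto shop:", smallest_other_gap)
```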

Number of Yelp Reviews Posted

The number of reviews posted by a Yelp user does appear to significantly impact the visibility of their reviews.

- Restaurant: 6 vs 1.5

- Auto Shop: 7 vs 1

- Plumber: 7 vs 2

- Clothing: 10 vs 2

- Golf Course: 36 vs 2.5

The difference here is pretty stark. The plumbing business had the smallest gap, and even then the median visible reviewer had posted 3.5 times the number of reviews as the median filtered reviewer.
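The ratio mentioned above is easy to verify. This Python sketch divides the median review count of visible reviewers by that of filtered reviewers for each business, using the medians from the list; the names are illustrative.

```python
# Median number of reviews written by the reviewer: (visible, filtered).
median_review_counts = {
    "Restaurant": (6, 1.5),
    "Auto Shop": (7, 1),
    "Plumber": (7, 2),
    "Clothing": (10, 2),
    "Golf Course": (36, 2.5),
}

# How many times more reviews the median visible reviewer has written.
ratios = {name: vis / filt for name, (vis, filt) in median_review_counts.items()}

# Business with the smallest visible-to-filtered ratio.
smallest = min(ratios, key=ratios.get)

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x")
print("Smallest gap:", smallest)
```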

Looking at the raw data seems to reinforce the conclusion that review count is strongly factored into Yelp's algorithm: the five highest review counts for the 66 filtered reviews I looked at were 59, 20, 19, 9, and 9, and only 4.5% of reviewers with more than five reviews were filtered. Once a user's review count is in the high single digits (unless they've done something to make Yelp really cranky), their reviews are almost guaranteed to show up.

In our personal experience, we have seen reviews which had been filtered for months or years suddenly released from purgatory without explanation. Based on the data above, it seems likely that the reviews were unfiltered when users finally posted enough reviews to make Yelp happy.

The takeaway here is to not just encourage your customers to leave reviews for you, but to do so for other businesses as well; to be more active in reviewing their local community's businesses. Once they get past a total of about 6 or 7 reviews, it's very likely that all of their reviews will survive the algorithm's wrath.

Number of Yelp Friends

It appears that the number of Yelp friends that a user has also impacts the visibility of their reviews, but the correlation is a bit noisy when you dig deeper.

- Restaurant: 15.5 vs 2

- Auto Shop: 1 vs 0

- Plumber: 0 vs 0

- Clothing: 7 vs 0

- Golf Course: 7 vs 0

In looking at the averages, there's definitely a gap. However, in looking at the raw data, 10 of the 66 filtered reviews were written by users with 20 or more Yelp friends, with 7 of them having more than 35 friends. A pretty significant chunk of the filtered reviews were written by social butterflies.

It appears that while the friend count does have some impact, it's not nearly as determinative as the other factors described above. The takeaway is that having Yelp friends helps, but can be outweighed by other factors.

Number of Photos Posted

On the surface, the number of photos posted by Yelp users doesn't appear to have a profound impact on review visibility…

- Restaurant: 4.5 vs 0.5

- Auto Shop: 7 vs 0

- Plumber: 0 vs 0

- Clothing: 0 vs 0

- Golf Course: 2 vs 0

Obviously, there are no instances in which users with filtered reviews averaged more photos than those with visible reviews. But for two businesses, the medians were both 0, and the golf course comparison isn't terribly compelling either.

However, the raw data tells a very interesting story: there are very, very few filtered reviews posted by users with significant photo counts. Of the 66 filtered reviews, the top five photo counts were 26, 21, 6, 5, and 2. That's a seriously drastic fall-off. 94% of the filtered reviews were posted by users that had submitted 2 or fewer photos to Yelp.
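The 94% figure follows directly from the top five counts. Here is the arithmetic as a short Python sketch; it relies on the fact that the fifth-highest count is 2, so every filtered reviewer outside the top five must also have 2 or fewer photos.

```python
# Figures from the paragraph above.
total_filtered = 66
top_five_photo_counts = [26, 21, 6, 5, 2]

# Only reviewers in the top five can have more than 2 photos, because the
# fifth-highest count is already 2.
more_than_two = sum(1 for count in top_five_photo_counts if count > 2)
two_or_fewer = total_filtered - more_than_two

share = two_or_fewer / total_filtered
print(f"{two_or_fewer} of {total_filtered} filtered reviews ({share:.0%}) "
      "came from users with 2 or fewer photos")
```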

The takeaway here is that while a lot of Yelp users don't post photos, posting even a small handful of photos has a pretty good likelihood of getting a user's reviews out of purgatory.

The Final Analysis of Our Little Yelp Experiment

To condense the couple thousand words or so above into something short and sweet, here's what I think. First of all, I don't see evidence that the rating of a review has an impact on whether a review is filtered or not.

Secondly, the other factors in play all definitely have some sway on whether a review is filtered. If I were to rank these four variables in terms of importance, taking into account a user's time investment (it'd be great if every user wrote 10 reviews, but that takes a lot of time), this would be my ranking:

- Profile Image

- Photo Submissions

- Number of Reviews

- Number of Friends

Setting a profile image and uploading a couple photos of a business requires very little time, and the data indicates that these have a significant impact on the likelihood of a review being visible. After that, the quantity of reviews is very important, but the magical threshold where you're almost guaranteed to not be filtered is fairly high, at around 6 to 9 reviews. The number of friends matters as well, but doesn't outweigh the factors above (and for users who aren't inclined to socialize on Yelp, it's going to be tough to convince them to do otherwise).

The purpose of this analysis was to provide some actionable advice for business owners. So, if you're managing a business and you want all of your reviews to show up, the data suggests that you don't need to target Yelp super users. You just need to encourage your loyal customers to not only write a positive review, but also to take a couple minutes to add a profile image and take and submit a couple photos of your business. Then, maybe nudge them to leave reviews for other businesses as well to get their review count up. These little extra actions can significantly raise the odds that their review of your business will show up.

Source: https://www.postmm.com/social-media-marketing/yelp-reviews-not-recommended-data-analysis/
